Advancing Cancer Research with Deep Learning Image Analysis

AACR 2019 Annual Meeting in Atlanta: Aiforia Inc. and Dr. Peter Westcott from the Tyler Jacks laboratory at MIT presented the results of their collaboration.

Since the work of Rudolf Virchow and his successors, medical image analysis has been at the forefront of medical research. Cancer care and treatment still depend on visual diagnosis of biopsy specimens, often at multiple points of the care cycle. Recent developments in deep learning neural networks have launched a new era of automated semi-intelligent medical image analysis. Coupled with the phenomenal explosion in GPU computational power, these advances have made complex cellular features, first recognized by Virchow, amenable to automated analysis workflows.

Pathologists are trained to recognize a wide variety of complex disease states through pattern recognition, guided by the knowledge of modern medicine and vetted by certifications, boards, and second opinions. We can approach deep learning solutions for anatomical pathology with the same process and rigor. Precise medical definitions, or ‘ground truths’ as they are known in image analysis sciences, are as essential to training an AI as they are to training a human pathologist. Because training carries inherent errors, its outcome always falls somewhat short of the ground truth provided. Improvements arise as skilled individuals adjust the ground truth based on new data. One of the major advantages of computational pathology is the elimination of inter-operator variability, which facilitates a more consistent recapitulation of ground truth.

One of our approaches to medical image analysis is to train multi-class segmentation and quantitation algorithms using deep neural networks combined with an efficient user interface. The user interface is intended to be navigated by skilled domain experts who can provide ground truth. Because these experts can work directly with the neural network training, without support from IT resources or computational scientists, they can independently become the leaders we need in this new era of technology. The training approach is based on teaching the neural network to classify features much like a human would, by presenting examples of ground truth. A robust training framework that gives rapid, iterative feedback on annotation precision and on training progress speeds up the algorithm generation cycle. This allows robust and generalizable networks to be developed rapidly from as little input data as possible.

One of the benefits of a fully automated image analysis workflow is that complete analysis of whole slide images (WSIs) in large series becomes feasible. Instead of relying on case-level classifications or estimates from sub-sampling, the workflow can analyze the entire dataset exactly and tirelessly, which improves the quality of the data, especially in research and drug development settings. Heterogeneity of disease and of response to treatment makes single-classification quantification strategies difficult. This has been highlighted in personalized treatment strategies, especially in recent immunotherapy modalities.

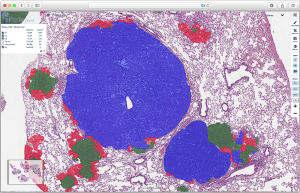

One example is the widely used, genetically engineered mouse model of non-small cell lung cancer (NSCLC) developed by the laboratory of Tyler Jacks at the Koch Institute at MIT. This model harbors conditional alleles of mutant KRAS and TRP53 (KP), faithfully recapitulating the major genetic and histopathological features of the human disease, including progression from early adenoma to metastatic adenocarcinoma. One feature of the KP model is multifocal and heterogeneous tumor burden. This allows for detailed analysis of heterogeneity in response to treatments and manipulation of driver genes, but at the same time demands careful and labor-intensive quantitation of tumor grade and burden. Recent efforts to understand immune involvement in NSCLC development combine the KP model with modulation of the immune response and require analysis of tumor kinetics and evolution at the WSI level. Thus, the model is an ideal candidate for automated tumor grading and burden quantitation by intelligent neural networks.

We trained a convolutional neural network (CNN) algorithm for semantic multiclass segmentation using the Aiforia platform. The algorithm was trained to quantitate tumor burden and grades 1 through 4, ranging from early hyperplasia to invasive carcinoma. The performance of the algorithm was evaluated by scoring it against WSI annotations made by an expert human annotator on an independent data set. Grade-specific F1 scores were 89%, 97%, 99%, and 98% for grades 1, 2, 3, and 4, respectively. The corresponding total area errors were 0.4%, 0.2%, 0.4%, and 0.1%. The flexibility of the approach is further evidenced by additional CNNs that faithfully identify and quantitate small cell lung cancer (SCLC) and infiltrating immune cells in both SCLC and NSCLC.
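To make the two reported metrics concrete, here is a minimal sketch of how a grade-specific F1 score and an area error could be computed from a pair of pixel label masks. It is not the Aiforia platform's scoring code: the function name `per_grade_metrics`, the use of label 0 for non-tumor tissue, and the definition of area error as the absolute difference in labeled area divided by total pixels are all assumptions made for illustration, and the toy masks are invented.

```python
from collections import Counter

GRADES = (1, 2, 3, 4)  # assumption: label 0 means background / non-tumor


def per_grade_metrics(truth, pred, grades=GRADES):
    """Return {grade: (f1, area_error)} for two equally sized label masks.

    `truth` and `pred` are flat sequences of integer labels, one per pixel.
    `area_error` is |predicted area - annotated area| / total pixels, which
    is only one possible definition (hypothetical, not from the source).
    """
    if len(truth) != len(pred):
        raise ValueError("masks must have the same number of pixels")
    pairs = Counter(zip(truth, pred))  # (true label, predicted label) counts
    total = len(truth)
    results = {}
    for g in grades:
        tp = pairs[(g, g)]
        fp = sum(n for (t, p), n in pairs.items() if p == g and t != g)
        fn = sum(n for (t, p), n in pairs.items() if t == g and p != g)
        denom = 2 * tp + fp + fn
        f1 = 2 * tp / denom if denom else float("nan")
        area_error = abs(fp - fn) / total if total else float("nan")
        results[g] = (f1, area_error)
    return results


# Toy 10-pixel masks, invented for illustration only.
truth = [0, 1, 1, 2, 2, 2, 3, 3, 4, 0]
pred = [0, 1, 2, 2, 2, 2, 3, 3, 4, 4]
for grade, (f1, err) in per_grade_metrics(truth, pred).items():
    print(f"grade {grade}: F1={f1:.2f}, area error={err:.1%}")
```

Note that F1 penalizes every misplaced pixel, while the area error only compares totals, so a model can show a small area error even when individual pixels are misclassified in offsetting directions. Reporting both, as above, covers both views.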

The algorithm has been fully deployed at the academic site, and early feedback suggests universal satisfaction with its ability to perform accurately and consistently at scale and across users. It not only increases the throughput of the laboratory but also improves the potential for cross-study comparison, as the analyses are performed by the same AI operator, which never tires.

An inevitable real-world effect of larger-scale deployment is that end users will disagree with the algorithm, however slightly, potentially due to shifts in ground truth. Our preferred solution for this situation is to rapidly retrain, validate, and deploy a derivative version of the algorithm. In this case, we retrained and deployed a derivative version that corrects for a specific change that occurs with deletion of the tumor suppressor Keap1 in the KP model, where the boundary between grade 2 and grade 3 drifts due to an early acceleration of grade 2 tumors. This led to a slight shift in the apparent human ground truth that the algorithm was not originally trained for. The derivative model was tested against both the deviating project and the original validation data, showing no drift in its original capabilities and a new skill in correctly classifying grade 2 tumors with features of early acceleration.
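The "no drift on original capabilities" check can be sketched as a simple per-grade comparison between the original and the derivative model on the original validation data. This is an illustrative sketch only: the function `find_regressions`, the 0.01 tolerance, and the derivative scores are hypothetical placeholders and not reported results. Only the baseline F1 values are taken from the figures quoted earlier in this article.

```python
def find_regressions(baseline_f1, derivative_f1, tolerance=0.01):
    """Return {grade: change} for grades whose F1 fell by more than `tolerance`.

    An empty dict means the derivative model shows no drift beyond the
    tolerance. The tolerance value is an assumption, not a published threshold.
    """
    return {
        grade: derivative_f1[grade] - score
        for grade, score in baseline_f1.items()
        if derivative_f1[grade] < score - tolerance
    }


# Baseline: grade-specific F1 scores reported for the original algorithm.
baseline = {1: 0.89, 2: 0.97, 3: 0.99, 4: 0.98}

# Placeholder scores for a derivative model (invented for illustration).
derivative_ok = {1: 0.89, 2: 0.98, 3: 0.99, 4: 0.98}
derivative_bad = {1: 0.89, 2: 0.97, 3: 0.95, 4: 0.98}

print(find_regressions(baseline, derivative_ok))   # no grade regressed
print(find_regressions(baseline, derivative_bad))  # grade 3 regressed
```

Running the same comparison on the new project's annotations would show the other half of the picture, namely whether the retrained model actually gained the skill it was retrained for.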

We were thrilled that, among the numerous groundbreaking cancer research studies from the global AACR community, we were given the opportunity to present these findings together with our collaborators at MIT and to share insights on Aiforia’s deep learning pathology solutions. Book your free demo now.

Thomas Westerling-Bui, PhD

Senior Scientist, Business Development

Aiforia Inc.

Book a demo in North America: https://calendly.com/aiforia-america/demo

Book a demo in Europe and rest of the world: https://calendly.com/europe-row/demo